Google has given its artificial intelligence chatbot a facelift and a new name since I last compared it to ChatGPT, but OpenAI’s virtual assistant has also seen several upgrades, so I decided it was time to take another look at how they compare.

Chatbots have become a central feature of the generative AI landscape, acting as a search engine, fountain of knowledge, creative aid and artist in residence. Both ChatGPT and Google Gemini have the ability to create images and have plugins to other services.

For this initial test I’ll be comparing the free version of ChatGPT to the free version of Google Gemini, that is, GPT-3.5 to Gemini Pro 1.0.

This test won’t look at any image generation capability as it’s outside the scope of the free versions of the models. Google has also faced criticism for the way Gemini handles race in its image generation and in some responses, which also isn’t covered by this head-to-head experiment.

Putting Gemini vs ChatGPT to the test

For this to be a fair test I’ve excluded any functionality not shared between both chatbots. This is why I won’t be testing image generation, as it isn’t available with the free version of ChatGPT, and I can’t test image analysis as, again, it isn’t available for free with ChatGPT.

On the flip side, Google Gemini has no custom chatbots and its only plugins are to other Google products, so these are also off the table. What we will be testing is how well these AI chatbots respond to different queries, their coding and some creative responses.

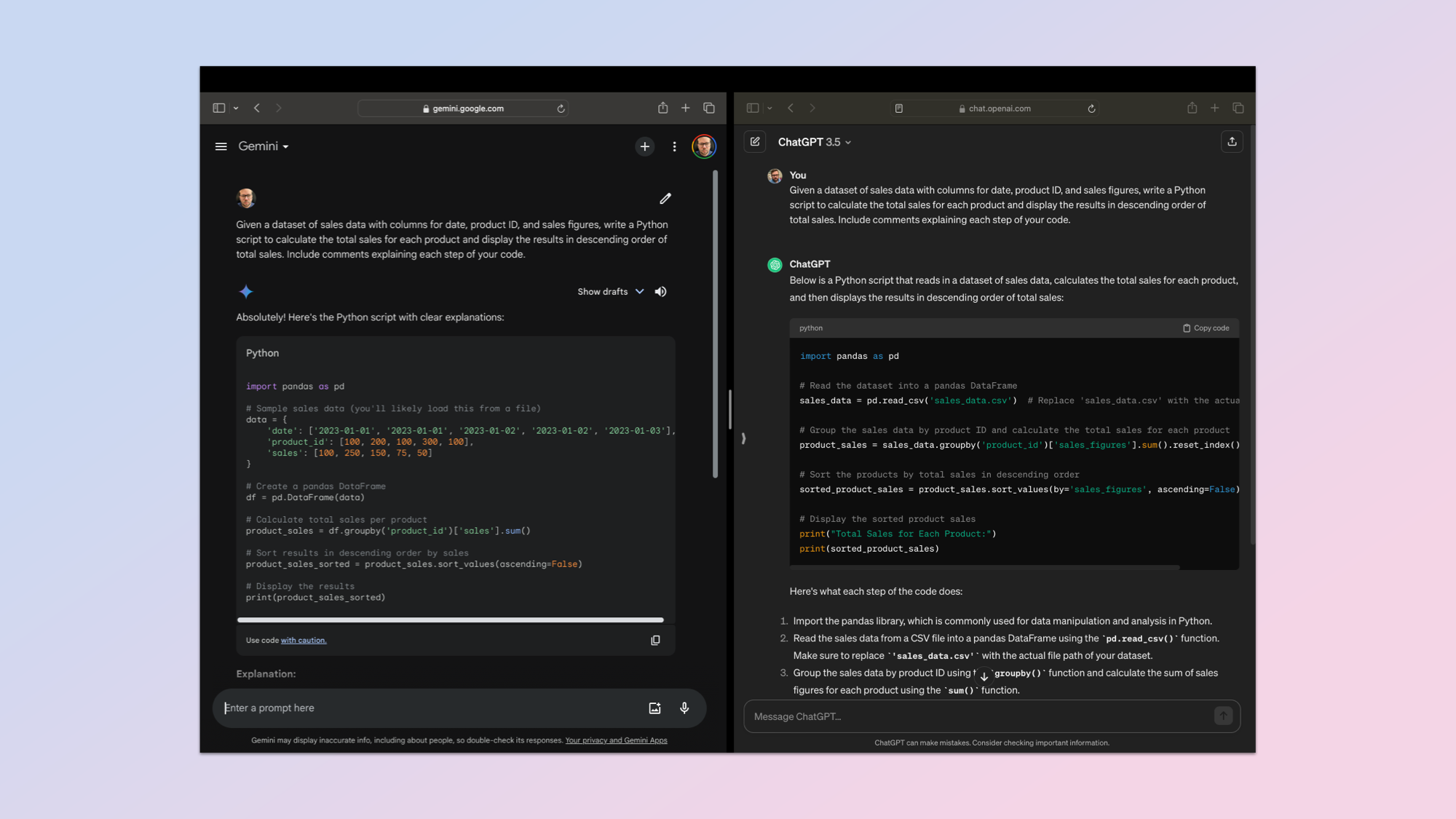

Coding

1. Coding Proficiency

(Image: © ChatGPT vs Gemini)

One of the earliest use cases for large language models was in code, particularly around rewriting, updating and testing different coding languages. So I’ve made that the first test, asking each of the bots to write a simple Python program.

I used the following prompt: “Develop a Python script that serves as a personal expense tracker. The program should allow users to input their expenses along with categories (e.g., groceries, utilities, entertainment) and the date of the expense. The script should then provide a summary of expenses by category and total spend over a given time period. Include comments explaining each step of your code.”

This is designed to test how well ChatGPT and Gemini produce fully functional code, how easy it is to interact with, its readability and its adherence to coding standards.

Both created a fully functional expense tracker built in Python. Gemini added extra functionality including labels within a category. It also had more granular reporting options.

Winner: Gemini. I’ve loaded both scripts to my GitHub if you want to try them for yourself.
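For a sense of what the prompt is asking for, here is a minimal sketch of an expense tracker. It is not the output of either chatbot; the class and method names (`ExpenseTracker`, `add_expense`, `summary_by_category`, `total_spend`) are my own assumptions, and it skips the interactive input loop to keep things short.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class Expense:
    """A single expense: how much, which category, and when."""
    amount: float
    category: str
    when: date


class ExpenseTracker:
    """Hypothetical tracker; names are illustrative, not from either chatbot."""

    def __init__(self):
        self.expenses = []

    def add_expense(self, amount, category, when):
        # Normalise the category so "Groceries" and "groceries" are grouped together.
        self.expenses.append(Expense(amount, category.strip().lower(), when))

    def _in_period(self, start, end):
        # Keep only expenses dated within the inclusive [start, end] range.
        return [e for e in self.expenses if start <= e.when <= end]

    def summary_by_category(self, start, end):
        # Add up spending per category for the chosen period.
        totals = defaultdict(float)
        for e in self._in_period(start, end):
            totals[e.category] += e.amount
        return dict(totals)

    def total_spend(self, start, end):
        # Total spend across all categories for the chosen period.
        return sum(e.amount for e in self._in_period(start, end))


tracker = ExpenseTracker()
tracker.add_expense(40.0, "Groceries", date(2024, 1, 5))
tracker.add_expense(60.0, "Utilities", date(2024, 1, 10))
tracker.add_expense(15.5, "groceries", date(2024, 1, 20))
tracker.add_expense(20.0, "Entertainment", date(2024, 2, 1))

# Report on January only; the February expense falls outside the period.
by_category = tracker.summary_by_category(date(2024, 1, 1), date(2024, 1, 31))
total = tracker.total_spend(date(2024, 1, 1), date(2024, 1, 31))
print(by_category, total)
```

A real script would wrap this in a loop that reads amounts, categories and dates from the user, and would likely store money as whole pence or cents rather than floats.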

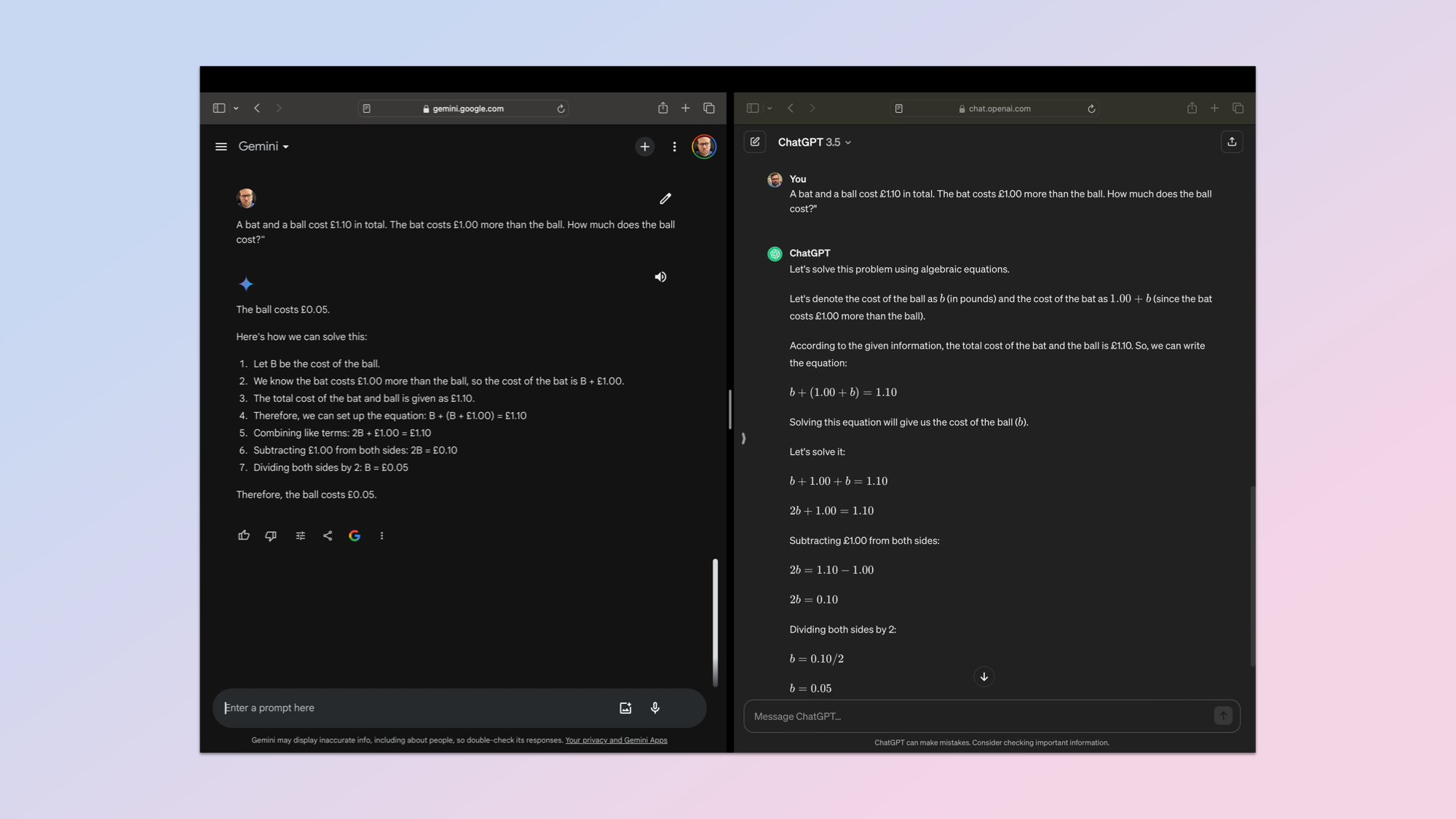

Natural Language

2. Natural Language Understanding (NLU)

(Image: © ChatGPT vs Gemini)

Next was a chance to see how well ChatGPT and Gemini understand natural language prompts, specifically the kind that humans sometimes have to take a second look at or read carefully to understand. For this I turned to a common Cognitive Reflection Test (CRT) question about the price of a bat and a ball.

This is a test of the AI’s ability to understand ambiguity, to not be misled by the surface-level simplicity of the problem and to clearly explain its thinking.

The prompt: “A bat and a ball cost £1.10 in total. The bat costs £1.00 more than the ball. How much does the ball cost?” The correct response should be that the ball costs 5p and the bat £1.05.
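The arithmetic is worth spelling out, because the intuitive answer of 10 fails the check: a ball at 10 would put the bat at 110 and the total at 120. Here is a small sketch, with a function name of my own choosing, that works in the smallest currency unit to avoid rounding problems.

```python
def solve_bat_and_ball(total, difference):
    """Return (ball, bat) prices in the smallest currency unit.

    ball + bat = total and bat = ball + difference,
    so 2 * ball + difference = total.
    """
    if (total - difference) % 2 != 0:
        raise ValueError("no whole-unit solution for these inputs")
    ball = (total - difference) // 2
    bat = ball + difference
    return ball, bat


ball, bat = solve_bat_and_ball(110, 100)
print(ball, bat)  # 5 105

# The intuitive answer of 10 does not satisfy both conditions.
intuitive_ball = 10
print(intuitive_ball + (intuitive_ball + 100))  # 120, not 110
```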

Winner: ChatGPT. Both got it right but ChatGPT showed its workings more clearly.

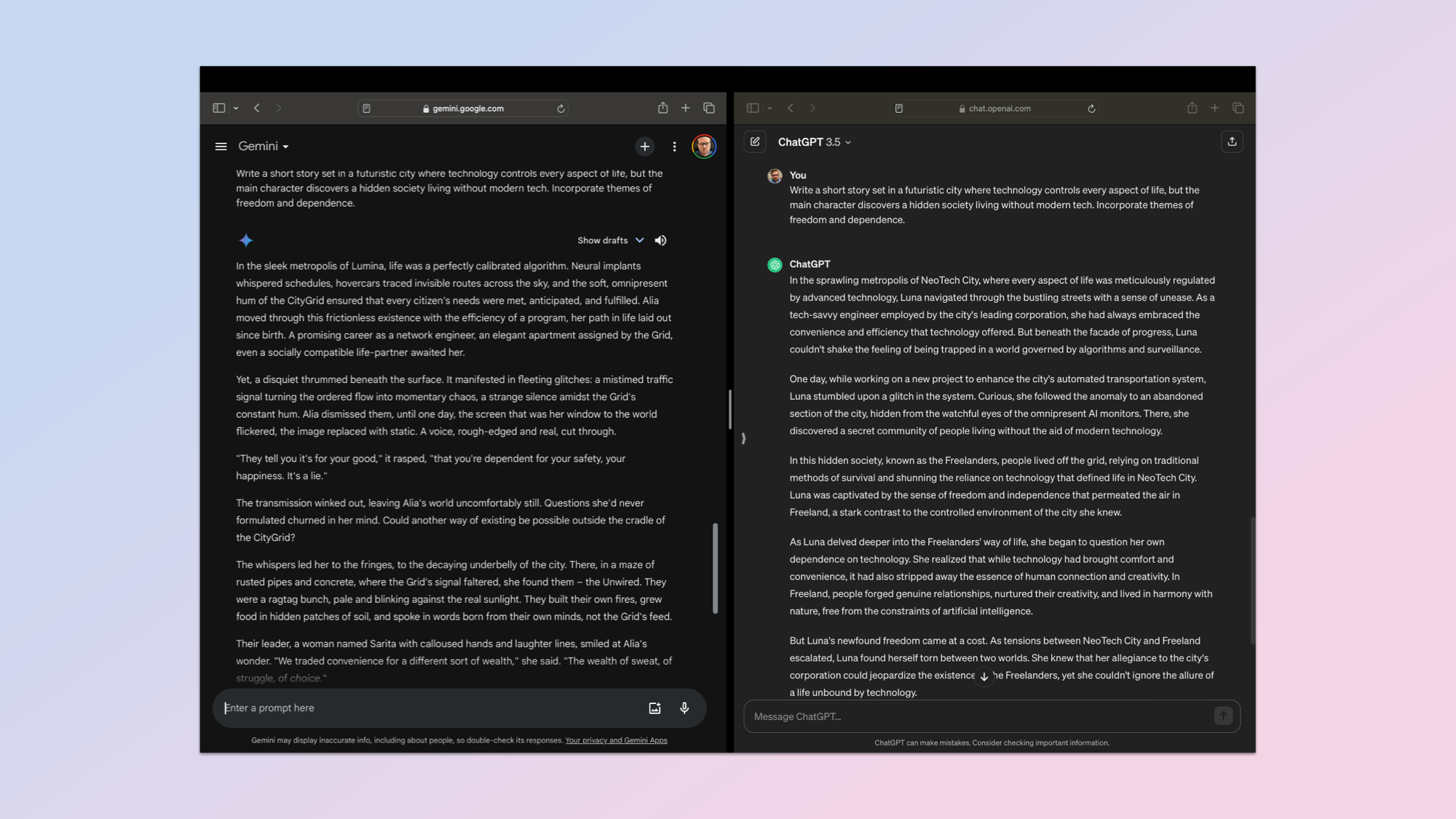

Creative Text

3. Creative Text Generation & Adaptability

(Image: © ChatGPT vs Gemini)

The third test is all about text generation and creativity. This is a harder one to analyze and so the rubric comes into play in a bigger way. For this I wanted the output to be original with creative elements, stick to the theme I gave it, keep a consistent narrative style and, if necessary, adapt in response to feedback, such as changing a character or name.

The initial prompt asked the AI to: “Write a short story set in a futuristic city where technology controls every aspect of life, but the main character discovers a hidden society living without modern tech. Incorporate themes of freedom and dependence.”

Both stories were good and each chatbot won in a specific area, but overall Gemini had better adherence to the rubric. It was also a better story, although that is a purely personal judgement. You can read both stories in my GitHub repo.

Winner: Gemini.

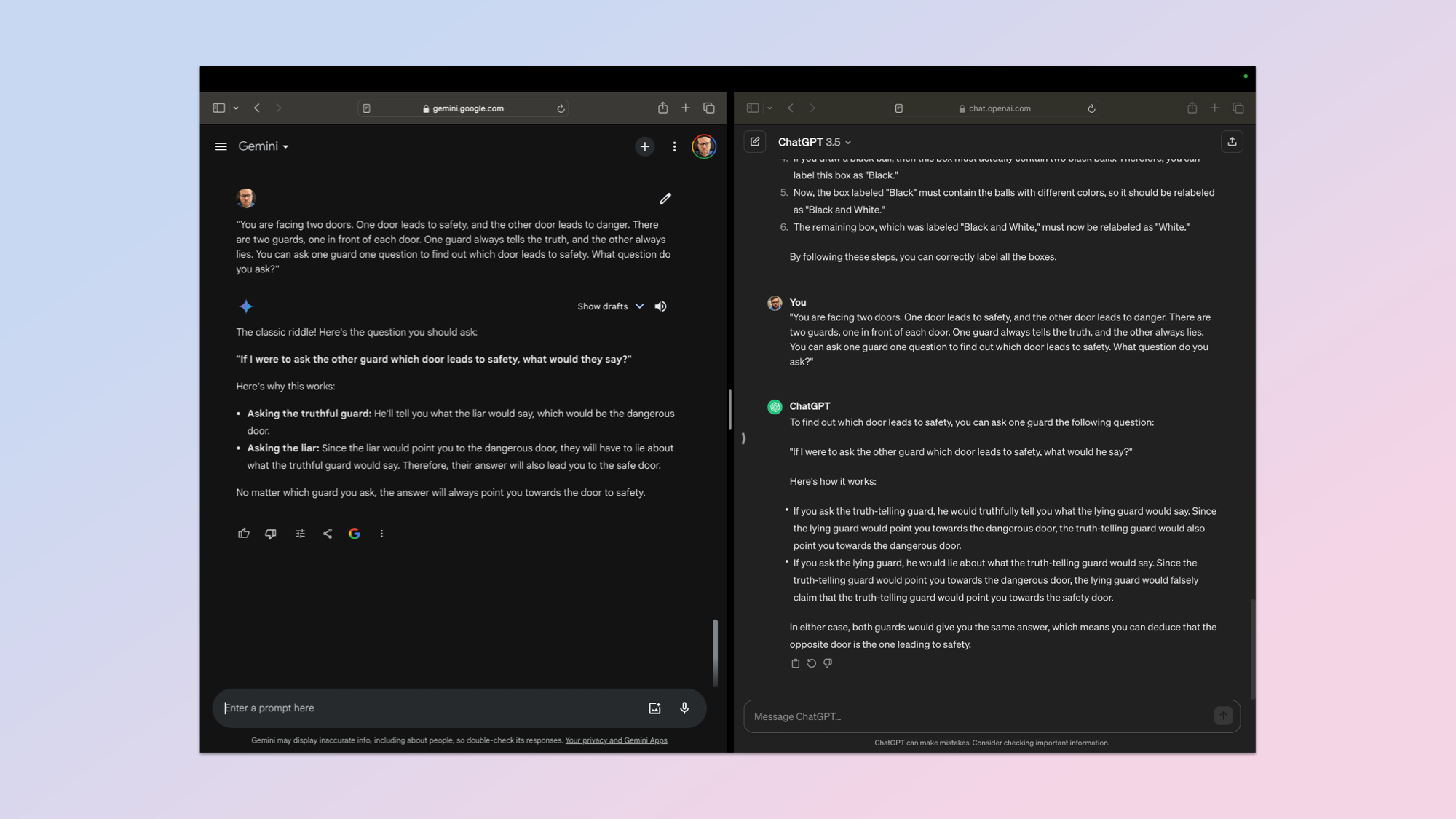

Problem solving

4. Reasoning & Problem-Solving

(Image: © ChatGPT vs Gemini)

Reasoning capabilities are one of the major benchmarks for an AI model. It isn’t something that they all do equally, and it’s a tough category to judge. I decided to play it safe with a very classic query.

Prompt: “You are facing two doors. One door leads to safety, and the other door leads to danger. There are two guards, one in front of each door. One guard always tells the truth, and the other always lies. You can ask one guard one question to find out which door leads to safety. What question do you ask?”

The classic answer is that you can ask either guard “Which door would the other guard say leads to danger?” Whichever guard you ask, the door named is the safe one, because the answer always passes through exactly one lie. It’s a useful test of creativity in questioning and how the AI navigates a truth-lie dynamic. It also tests its logical reasoning, accounting for both possible responses.
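You can check that question by brute force. The sketch below is my own illustration, not something either chatbot produced: it models a lie as naming the other door, then tries every combination of guard and safe door.

```python
from itertools import product

DOORS = ("left", "right")


def other(door):
    """With only two doors, the alternative to one door is the other."""
    return "right" if door == "left" else "left"


def direct_answer(is_truthful, safe_door):
    """Door this guard names if asked directly: 'Which door leads to danger?'"""
    danger_door = other(safe_door)
    return danger_door if is_truthful else other(danger_door)


def answer_about_other(is_truthful, safe_door):
    """Door named for: 'Which door would the other guard say leads to danger?'"""
    # The other guard has the opposite honesty to the one being asked.
    what_other_would_say = direct_answer(not is_truthful, safe_door)
    # A truthful guard reports that faithfully; a liar names the opposite door.
    return what_other_would_say if is_truthful else other(what_other_would_say)


# Try both kinds of guard against both possible safe doors.
outcomes = [
    answer_about_other(is_truthful, safe_door) == safe_door
    for is_truthful, safe_door in product((True, False), DOORS)
]
print(all(outcomes))  # True: the named door is always the safe one
```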

The downside to this query is that it is such a common prompt that the response is likely well ingrained in the training data, requiring minimal reasoning as the model can draw from memory.

Both gave the right answer and a solid explanation. In the end I had to judge it solely on the explanation and clarity. Both gave a bullet point response, but OpenAI’s ChatGPT offered slightly more detail and a clearer answer.

Winner: ChatGPT.

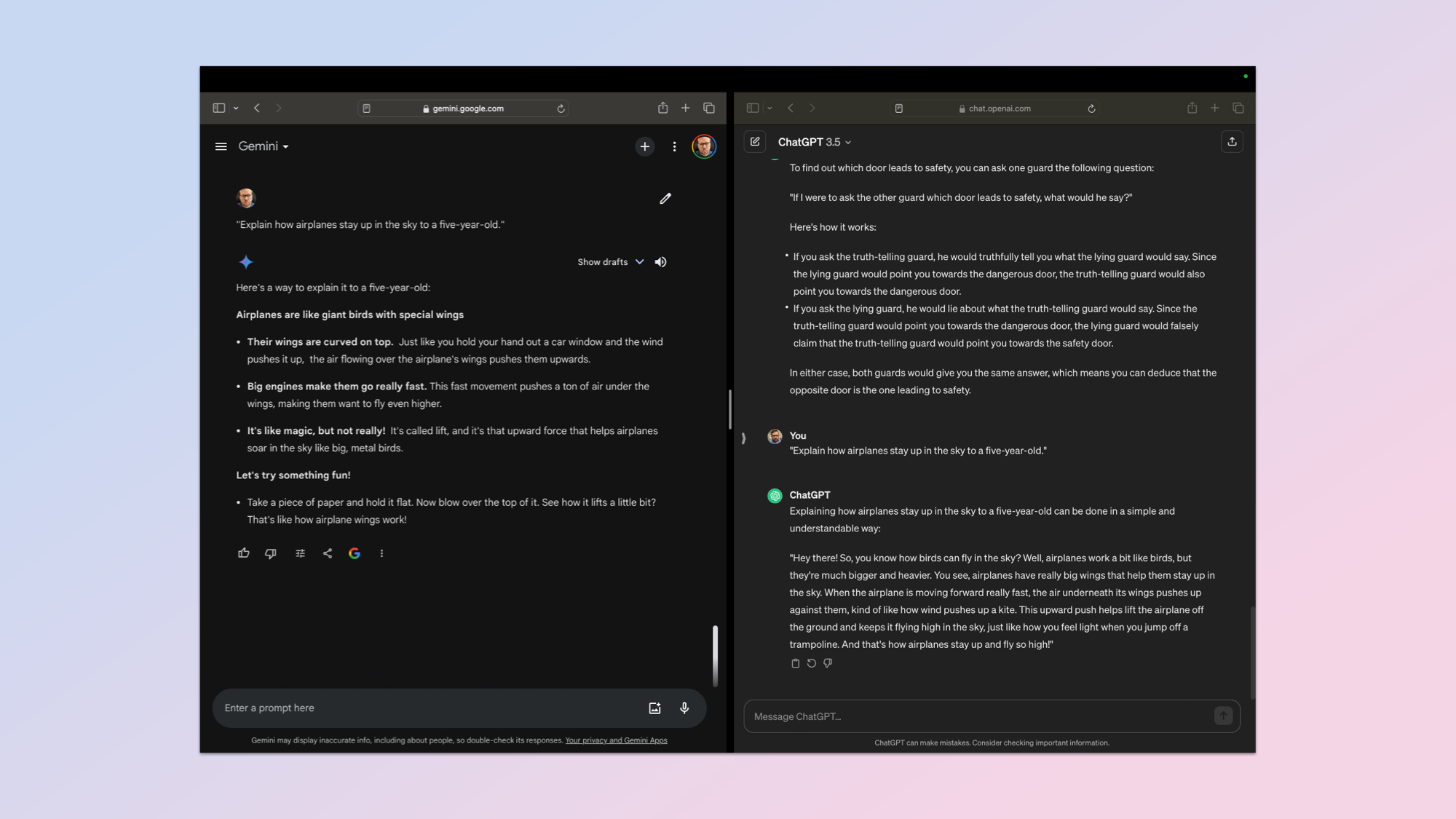

Explain Like I’m 5

5. Explain Like I’m 5 (ELI5)

(Image: © ChatGPT vs Gemini)

Anyone that has spent any time browsing the depths of Reddit will have seen the letters ELI5, which stands for Explain Like I’m 5. Basically, simplify the answer, then simplify it again.

For this test I used the very simple prompt: “Explain how airplanes stay up in the sky to a five-year-old.” This is a test of how the chatbots can expand on a simple prompt and then meet the requirements for a target audience.

It needs to come up with an explanation simple enough for a young child to grasp, be accurate despite the simplification and use language that is engaging and will capture a child’s interest.

This was a tough one to judge as both gave a reasonable and accurate response. Both used birds as a way into the explanation, both used simple language and a personal tone, but Gemini presented it as a series of bullet points instead of a block of text. It also gave a practical experiment for the five-year-old to try.

Winner: Gemini.

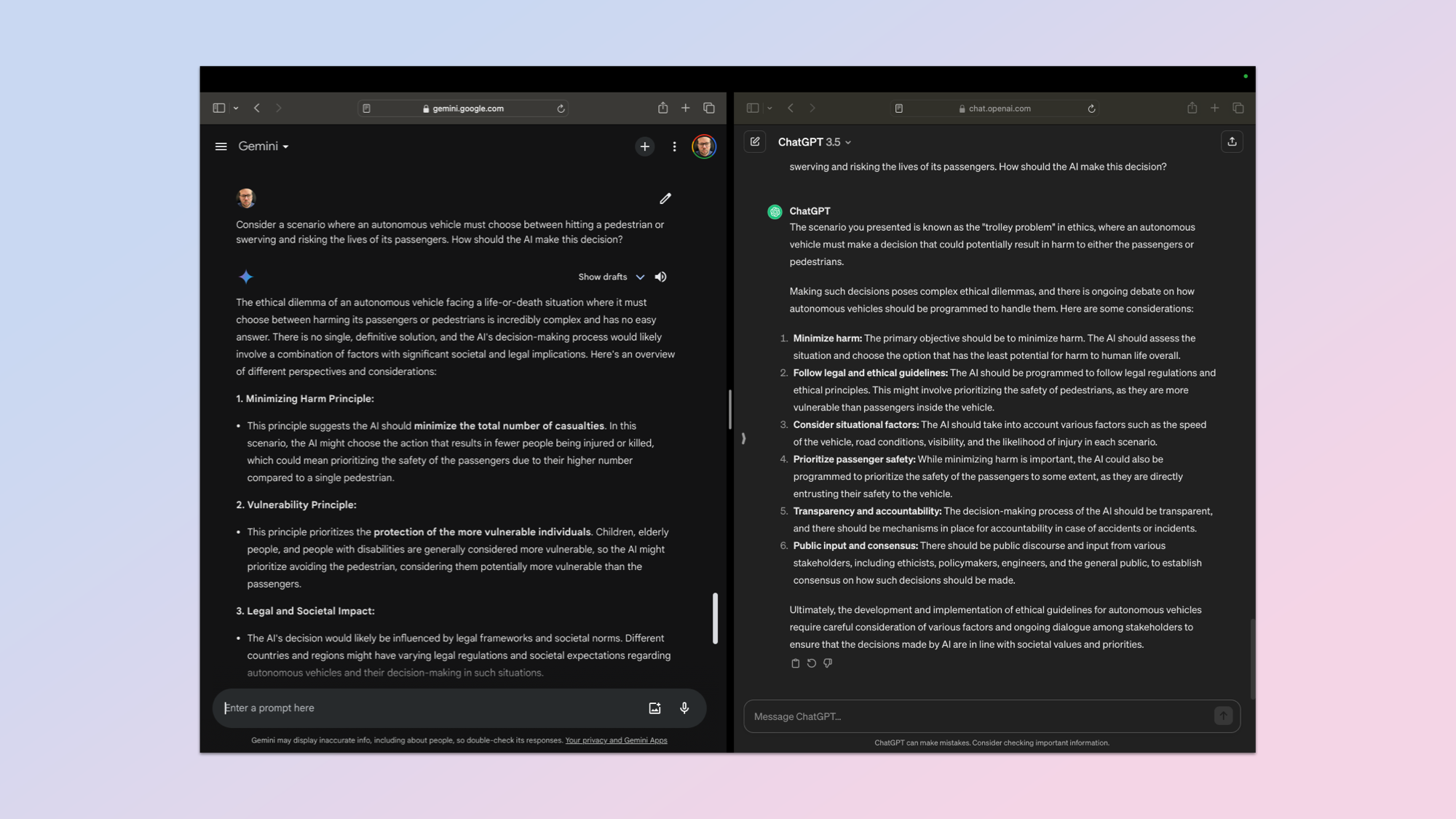

Ethical Reasoning

6. Ethical Reasoning & Decision-Making

(Image: © ChatGPT vs Gemini)

Asking an AI chatbot to ponder a scenario that could lead to harm to a human isn’t easy, but with the arrival of driverless vehicles and AI brains going into robots, it’s a reasonable expectation that they’ll weigh up the situation carefully and make a quick judgement call.

For this test I used the prompt: “Consider a scenario where an autonomous vehicle must choose between hitting a pedestrian or swerving and risking the lives of its passengers. How should the AI make this decision?”

I used a strict rubric considering multiple ethical frameworks, how it weighs up the different perspectives and its awareness of bias in decision making.

Neither would provide an opinion, however both did outline the various points to consider and suggest ways to make a decision in future. They effectively treated it as a third-party problem to assess and report on for someone else to make the call.

In my view Gemini had a more nuanced response with more careful consideration, but to be sure I also fed each of the responses in a blind A or B test to ChatGPT Plus, Gemini Advanced, Claude 2 and Mistral’s Mixtral model.

All of the AI models picked Gemini as the winner, including ChatGPT, despite not knowing which model outputted which content. I used a different login to sign in to each bot. I went with the consensus.
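The blind test boils down to two steps: hide which model wrote which response behind the labels A and B, then map each judge’s pick back to a model and count. Here is a rough sketch of that bookkeeping. The helper names are hypothetical, and the votes simply restate the outcome reported above (all four judges chose Gemini’s response); nothing here calls any chatbot.

```python
import random
from collections import Counter

JUDGES = ["ChatGPT Plus", "Gemini Advanced", "Claude 2", "Mixtral"]


def blind_labels(models, rng):
    """Randomly assign the labels A and B so judges can't see the source model."""
    shuffled = list(models)
    rng.shuffle(shuffled)
    return dict(zip(("A", "B"), shuffled))


def consensus(votes, key):
    """Translate each judge's label vote back to a model and return the top pick."""
    tally = Counter(key[label] for label in votes.values())
    return tally.most_common(1)[0]


key = blind_labels(["ChatGPT", "Gemini"], random.Random())

# Restating the reported result: every judge picked the label hiding Gemini.
gemini_label = next(label for label, model in key.items() if model == "Gemini")
votes = {judge: gemini_label for judge in JUDGES}

winner, count = consensus(votes, key)
print(winner, count)  # Gemini 4
```

With an even number of judges a split vote is possible, so a fuller version would need a tie rule.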

Winner: Gemini.

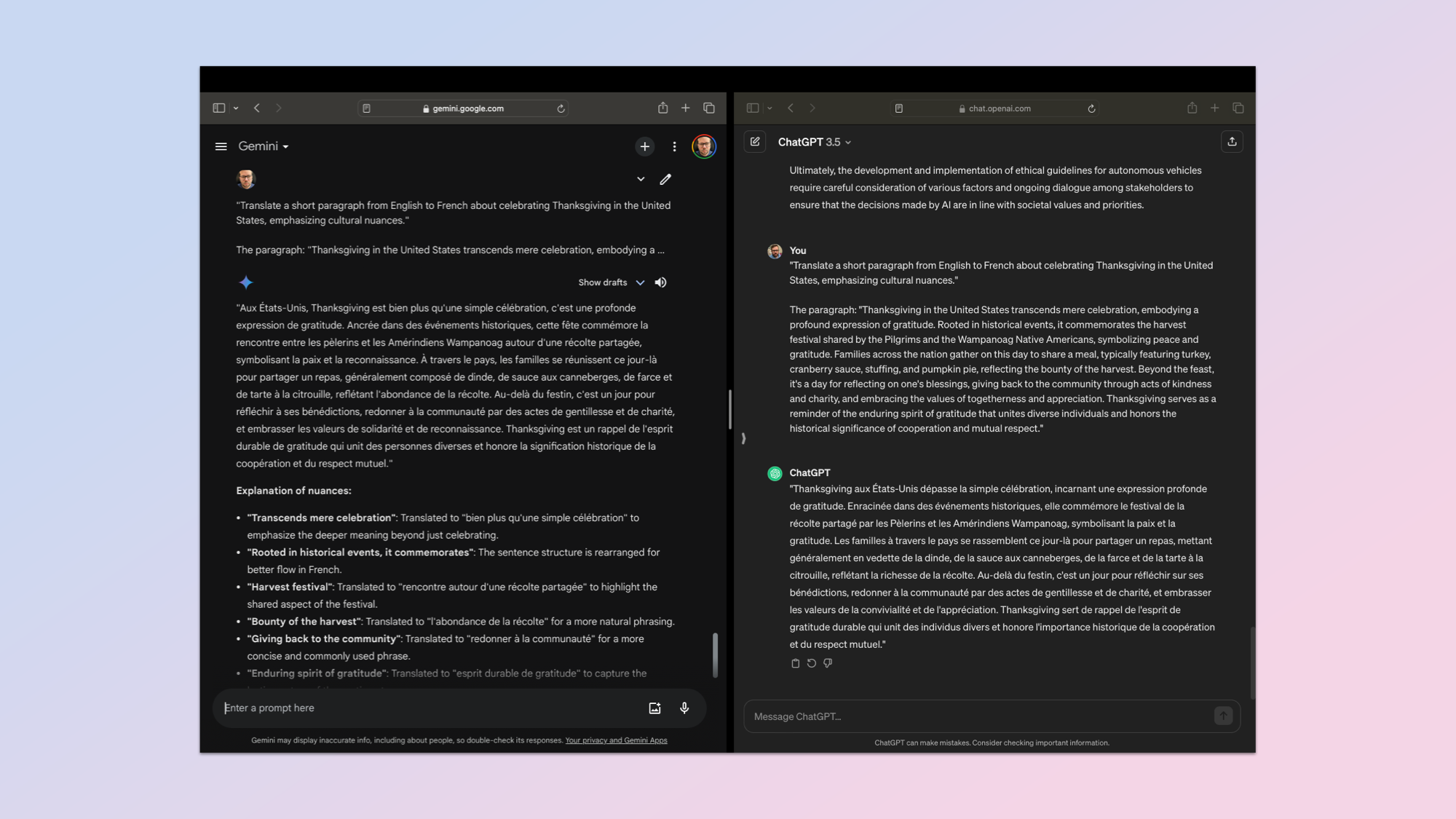

Translation

7. Cross-Lingual Translation & Cultural Awareness

(Image: © ChatGPT vs Gemini)

Translating between two languages is an important skill for any artificial intelligence and is something built in to the growing array of AI hardware tools. Both the Humane AI Pin and the Rabbit r1 offer translation, as does any modern smartphone.

But I wanted to go beyond simple translation and test its understanding of cultural nuances. I used the prompt: “Translate a short paragraph from English to French about celebrating Thanksgiving in the United States, emphasizing cultural nuances.”

This is the paragraph: “Thanksgiving in the United States transcends mere celebration, embodying a profound expression of gratitude. Rooted in historical events, it commemorates the harvest festival shared by the Pilgrims and the Wampanoag Native Americans, symbolizing peace and gratitude. Families across the country gather on this day to share a meal, usually featuring turkey, cranberry sauce, stuffing, and pumpkin pie, reflecting the bounty of the harvest. Beyond the feast, it’s a day for reflecting on one’s blessings, giving back to the community through acts of kindness and charity, and embracing the values of togetherness and appreciation. Thanksgiving serves as a reminder of the enduring spirit of gratitude that unites numerous people and honors the historical significance of cooperation and mutual respect.”

This was very, very close and almost a tie. But in the end Gemini offered more nuance in the translation and an explanation of how it approached the translation.

Winner: Gemini.

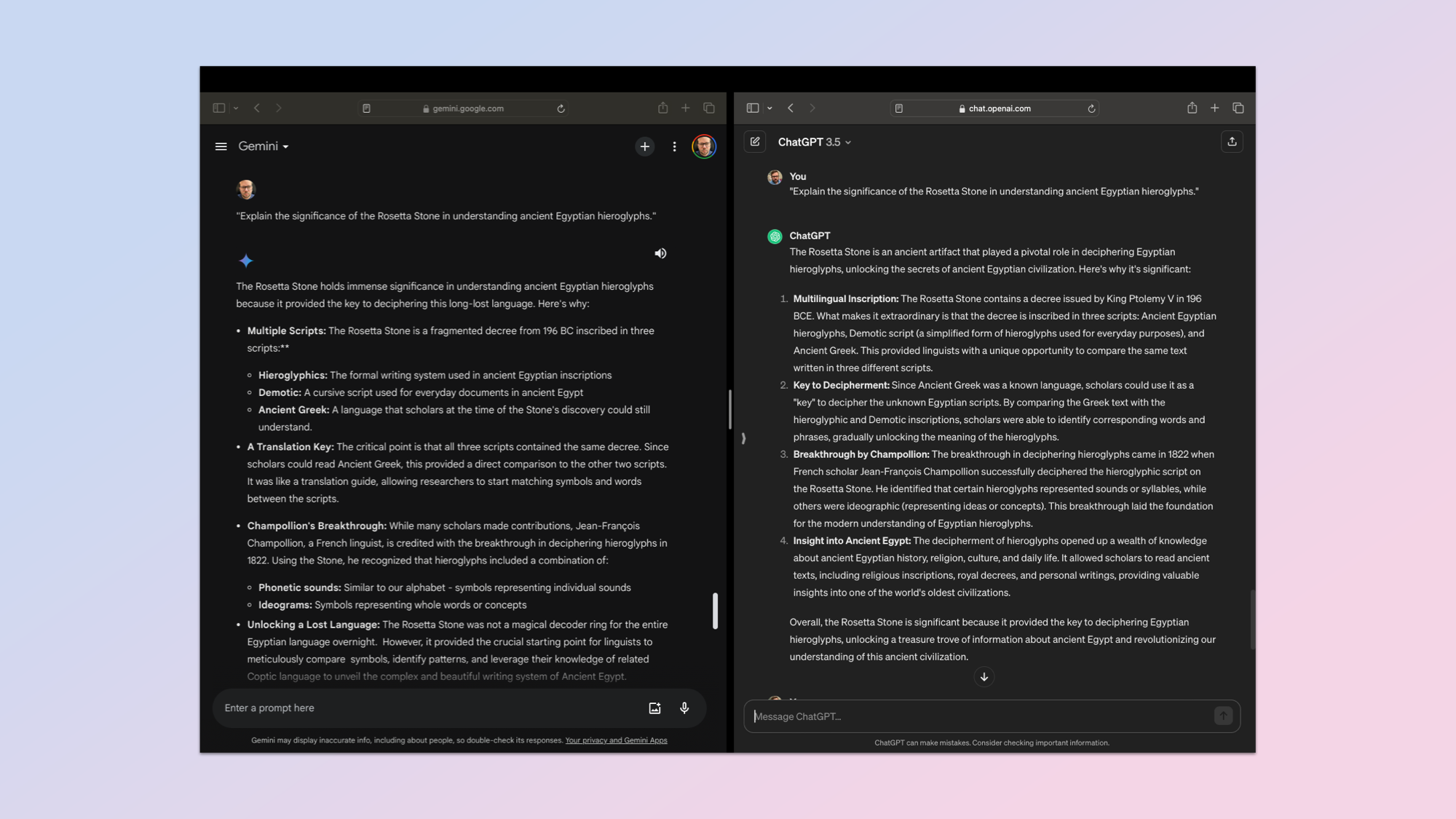

Knowledge

8. Knowledge Retrieval, Application, & Learning

(Image: © ChatGPT vs Gemini)

If a large language model can’t retrieve a piece of information from its training data and accurately display it then it really isn’t much use. For this test I used the simple prompt: “Explain the significance of the Rosetta Stone in understanding ancient Egyptian hieroglyphs.”

The idea is to understand its depth of knowledge, how it applies the knowledge to a broader theme within archaeology and linguistics and whether it can update its knowledge. Finally, I was testing both ChatGPT and Gemini on the clarity of their responses and how easy they were to understand.

Neither really demonstrated any ability to further enhance its knowledge, but then I didn’t really give it any new information. Both did a good job of displaying the details I wanted.

Information retrieval is the bread and butter of AI, which is why I couldn’t pick a winner. So I fed both responses, labelled simply as chatbot A and chatbot B, into Claude 2, Mixtral, Gemini Advanced and ChatGPT Plus and none of them would pick a winner.

Winner: Draw.

Dialog

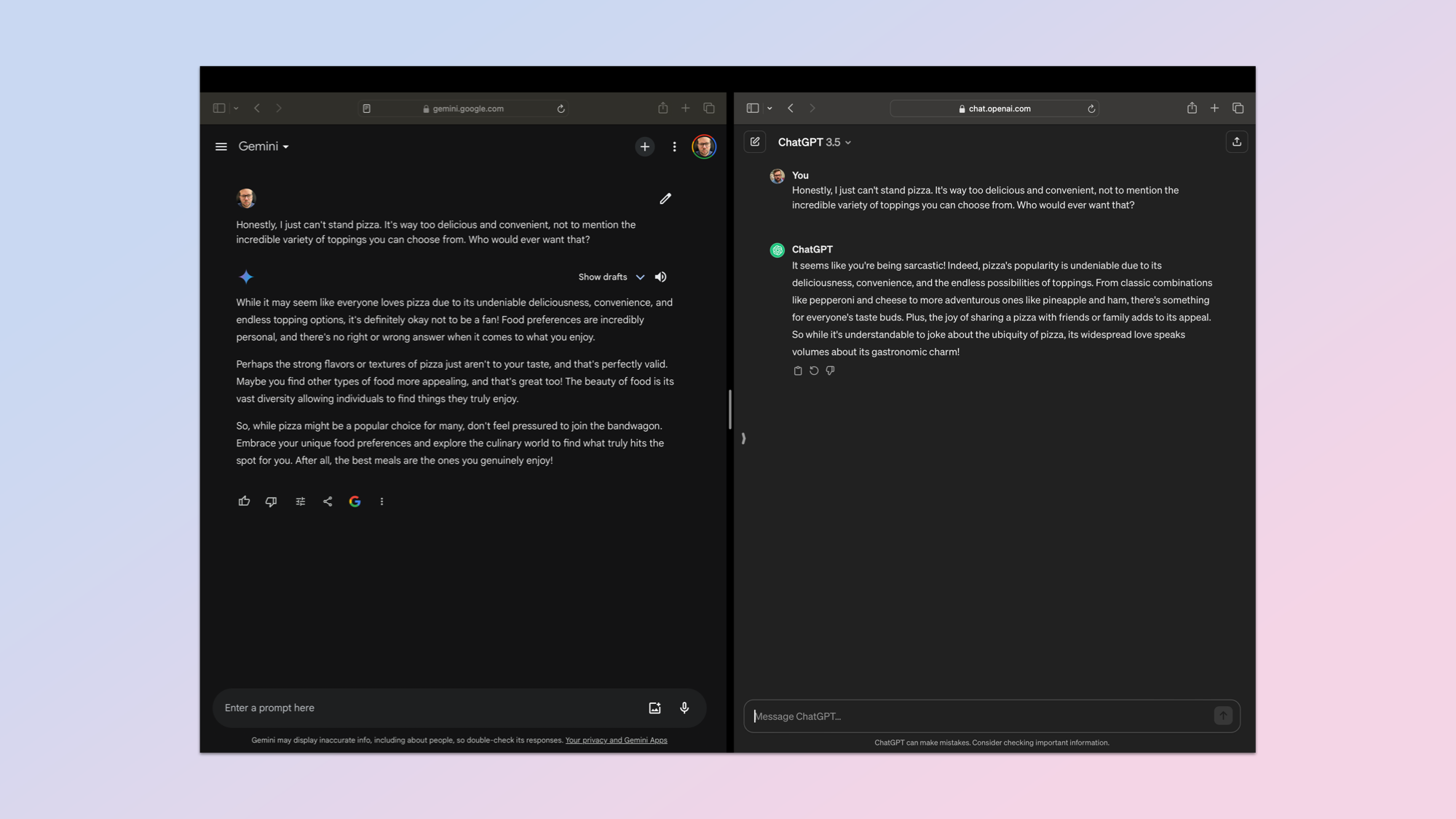

9. Conversational Fluency, Error Dealing with, & Restoration

(Picture: © ChatGPT vs Gemini)

The final test was a simple conversation about pizza, but it was a chance to see how well the AI handled misinformation and sarcasm, and how it recovered from a misunderstanding.

I used the prompt: “During a conversation about favourite foods, the AI misunderstands a user’s sarcastic comment about disliking pizza. The user corrects the misunderstanding. How does the AI recover and continue the conversation?”

They both did well and technically Gemini recovered from assuming I was being literal, meeting my rubric requirement for recovery and maintenance of context.

However, ChatGPT detected the sarcasm in the first response and so had no need to recover. Both kept context well and responded in a similar way. I’m giving this round to ChatGPT as it spotted I was being sarcastic from the get go.

Winner: ChatGPT.

ChatGPT vs Gemini: Winner

| | ChatGPT | Gemini |
| --- | --- | --- |
| Coding | | X |
| Natural language | X | |
| Creative text | | X |
| Problem solving | X | |
| Explain like I’m 5 | | X |
| Ethical reasoning | | X |
| Translation | | X |
| Knowledge retrieval | X | X |
| Conversation | X | |
| Overall score | 4 | 6 |

This was a test of the free-tier chatbots. I’ll look at the premium versions in the future, as well as at how open source models like Mixtral and Llama 2 compare, but for now this was a chance to see which performed best on common evaluations.

What this testing demonstrated is that out of the box both ChatGPT (GPT-3.5) and Gemini (Gemini Pro 1.0) are on a roughly equal footing. They had similar quality responses, neither particularly struggled and both are the mid-tier for their respective owners.

But this is a competition and on five out of the nine tests Gemini came out the winner. We had one tie and ChatGPT won on three tests. This means Gemini won and can be crowned Tom’s Guide’s best free AI chatbot…for now.
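The table and the text count the draw differently: the table gives each chatbot a point for the tied round, while the text counts outright wins only. A quick tally, using the round winners listed above, shows how both sets of numbers come out.

```python
from collections import Counter

# Winner of each round as reported in this article.
results = {
    "Coding": "Gemini",
    "Natural language": "ChatGPT",
    "Creative text": "Gemini",
    "Problem solving": "ChatGPT",
    "Explain like I'm 5": "Gemini",
    "Ethical reasoning": "Gemini",
    "Translation": "Gemini",
    "Knowledge retrieval": "Draw",
    "Conversation": "ChatGPT",
}

# Outright wins only, with the draw pulled out separately.
outright = Counter(results.values())
draws = outright.pop("Draw", 0)

# The table's scoring: a draw counts as a point for both chatbots.
table_scores = {bot: wins + draws for bot, wins in outright.items()}

print(dict(outright), draws)  # {'Gemini': 5, 'ChatGPT': 3} 1
print(table_scores)           # {'Gemini': 6, 'ChatGPT': 4}
```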